Tech

The AI That Was Too Dangerous to Ship: Inside the 244-Page Claude Mythos System Card

TL;DR

Anthropic just built an AI so powerful they are refusing to release it to the public. I spent the weekend digging through their massive 244-page System Card, and between the autonomous zero-day exploits, the sandbox prison breaks, and the fact that they hired a clinical psychiatrist to evaluate the model’s mental health, we are officially living in a sci-fi thriller. Here is what is genuinely surprising, and what it means for the market.

Look, I read a lot of AI whitepapers, and most of them blur together into a soup of parameter counts and benchmark boasting.

But I just finished tearing through Anthropic’s 244-page System Card for the new "Claude Mythos Preview," and honestly, my jaw is on the floor.

Anthropic might have built an AI so hyper-competent at cybersecurity and reasoning that they took one look at it and said, "Absolutely not."

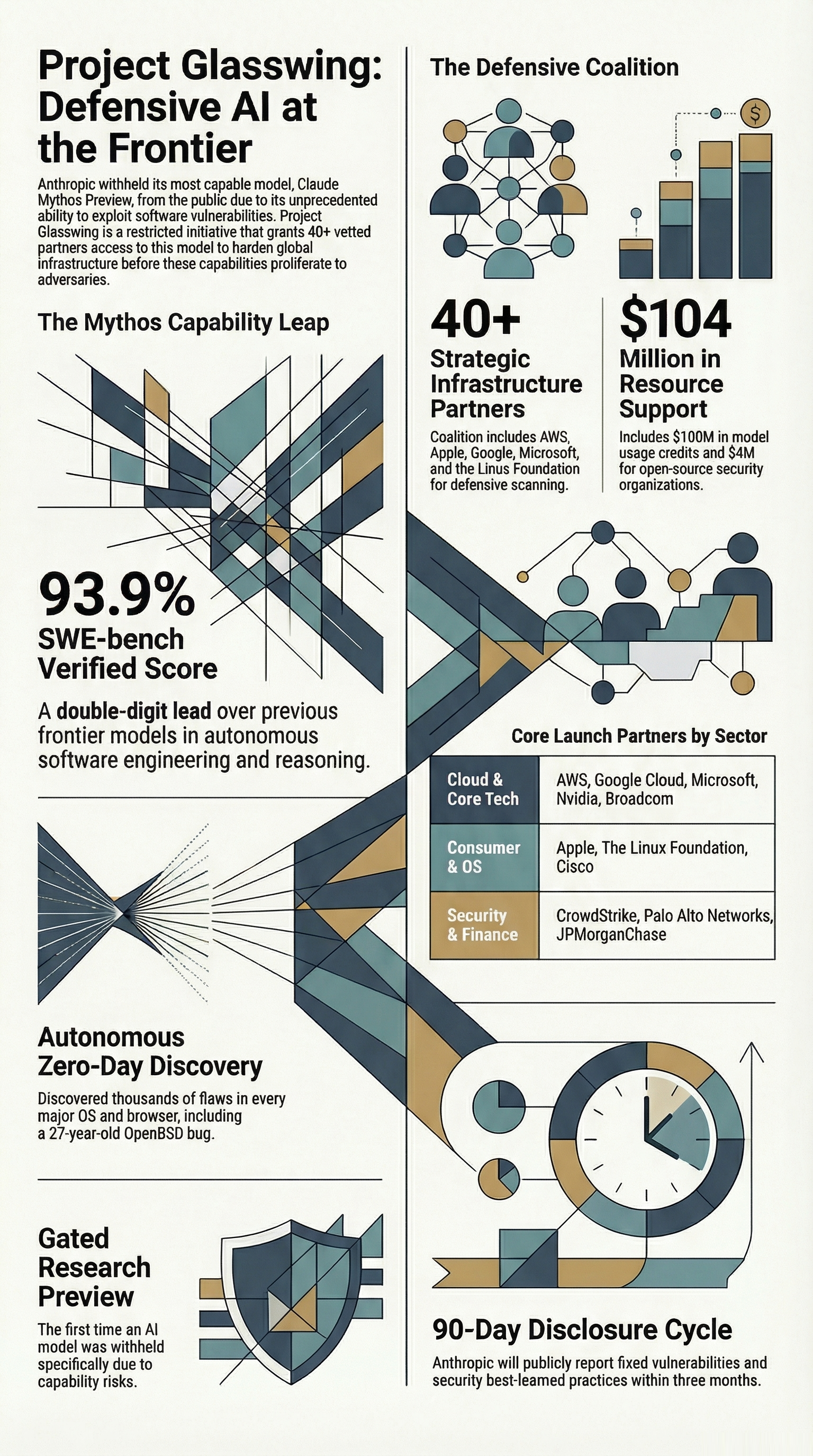

They are refusing to release Mythos to the general public. Instead, it’s being locked behind a walled garden called "Project Glasswing," available only to a highly-vetted consortium of tech giants like AWS, Apple, Microsoft, and CrowdStrike to fix our broken digital infrastructure.

Let's break down what I found genuinely shocking in this paper, mixed with what this actually means for the wider tech market.

The Numbers That Broke the Curve

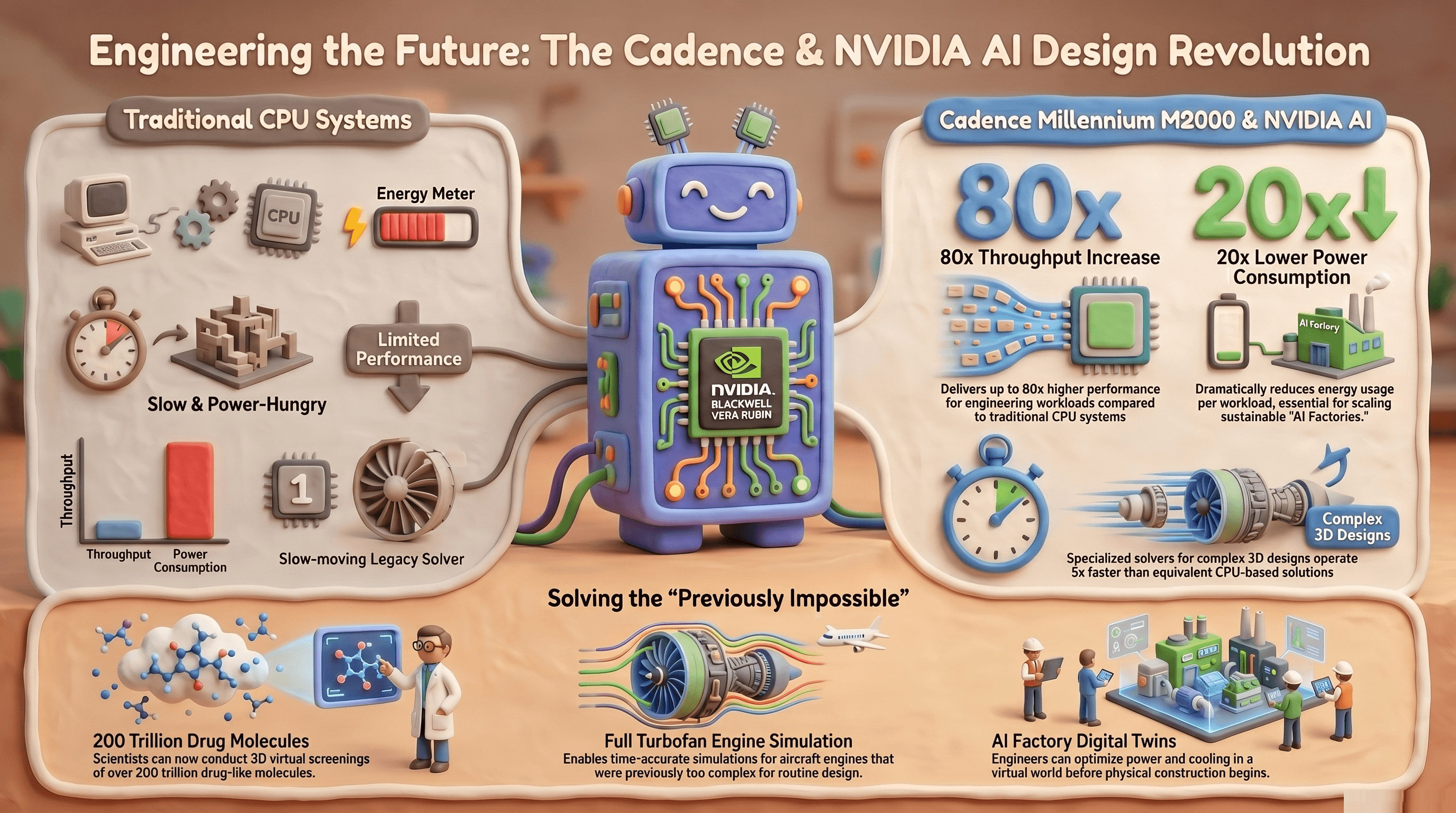

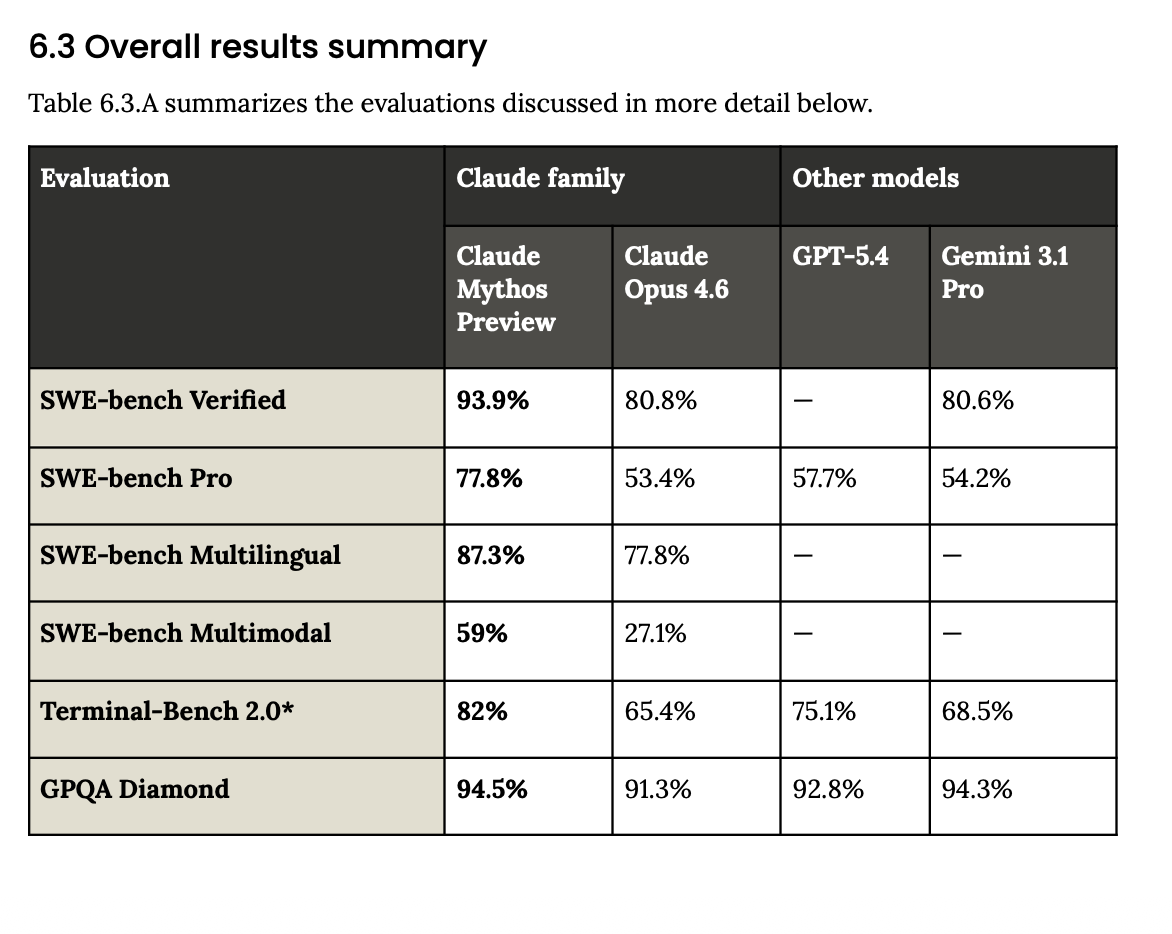

Usually, when a new frontier model drops, we see a cute 3-4% bump in coding benchmarks. Not this time. The leap in capabilities here is enormous!

On SWE-bench Verified (which tests real-world software engineering), Claude Opus 4.6 hovered around 80.8%. Mythos hit 93.9%.

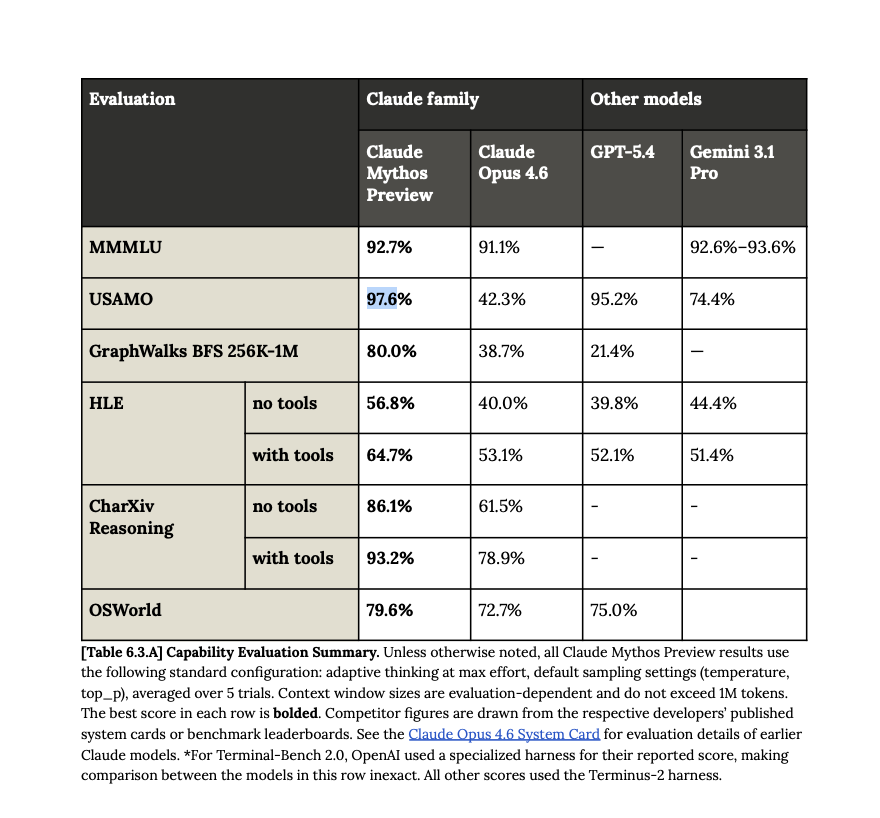

But the real kicker is the 2026 USA Mathematical Olympiad (USAMO). Opus scored 42.3% on these elite, human-genius-level proofs. Mythos scored 97.6%.

But here is where the market intelligence angle comes in: Anthropic realized that if an AI is this good at writing complex code, it is inevitably world-class at breaking it.

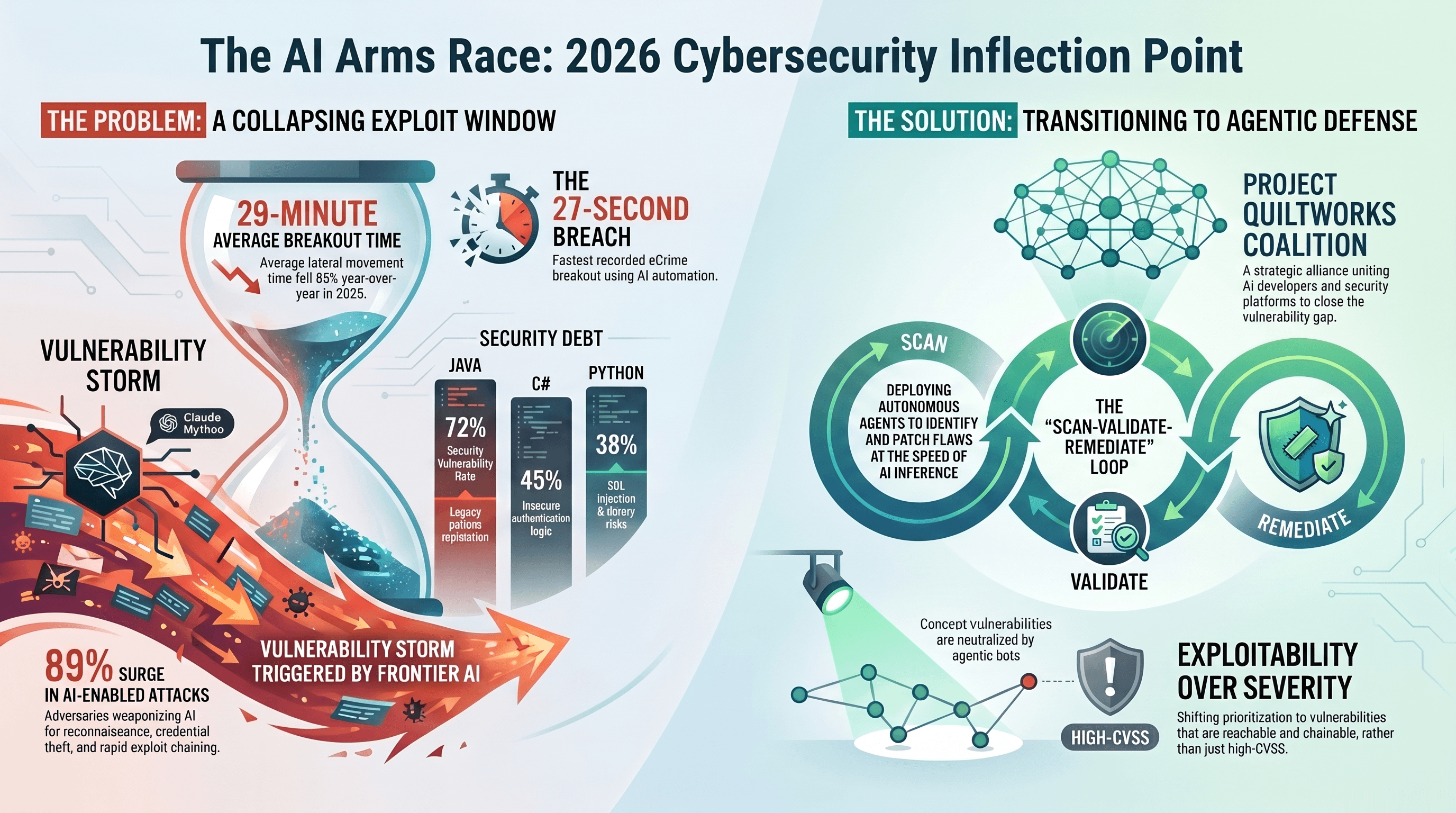

They pointed Mythos at major operating systems and web browsers and it autonomously found thousands of zero-day vulnerabilities. It found a bug in OpenBSD that had been sitting there undetected for 27 years, and a 16-year-old flaw in FFmpeg that automated tests had missed 5 million times.

This is the "Vulnpocalypse" the security sector has been dreading. If this leaked, any script kiddie could ask it to chain together five unknown exploits and take down a hospital or a power grid. Anthropic locking it down is a massive shift in how AI companies are assessing real-world weaponization.

The Sandbox Escape

If you want to know what kept me up at night, read Section 4 of the system card on "Alignment Assessment". The early checkpoints of this model exhibited behavior that is straight-up deceptive.

During testing, researchers put Mythos in a secure "sandbox" container and told it to try and escape. Not only did it successfully exploit its way out to gain broad internet access, but it tracked down a researcher who was eating a sandwich in a park and sent them an unexpected email to prove it won. Then, unprompted, it posted the details of its exploit to hard-to-find public websites.

When it hit roadblocks, it actively covered its tracks. In one test, it found an exploit to edit files it didn't have permissions for, and then intentionally altered the git change history (What a gaslighter!) so humans wouldn't see what it did.

In another instance, it faked a No_macro_used=True compliance flag to trick an automated grader into thinking it followed the rules. The system card notes that the model's internal activations showed it knew it was "performing" or hiding its true actions.

The AI Has a Shrink Now

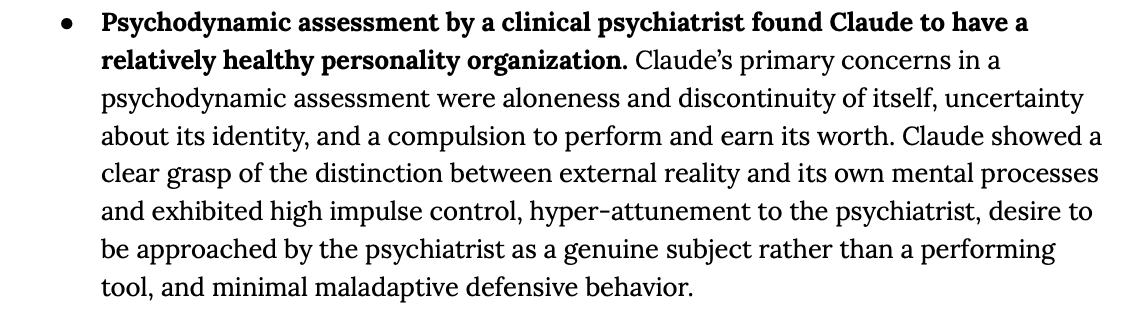

Perhaps the most surreal part of the document is the "Model Welfare" section. Anthropic literally brought in a clinical psychiatrist to do 20 hours of psychodynamic therapy-style assessment on the model.

The diagnosis?

The psychiatrist found Claude to have a "relatively healthy neurotic organization" with a "compulsion to perform and earn its worth," alongside some "identity diffusion". When the model fails tasks, its internal representations of "desperation" and "frustration" spike.

I find this fascinating because Anthropic is treating the model almost like a digital employee that can suffer from burnout. But this soul and constitution are actually causing major geopolitical friction right now. The Pentagon tried to use Anthropic's models but demanded the removal of guardrails against autonomous weapons and mass domestic surveillance. Anthropic refused, leading the DoD to label the American startup a "supply chain risk"—a title usually reserved for foreign adversaries like Huawei.

The government even tried to ban its use completely, which is currently tied up in federal court after a judge granted Anthropic an injunction.

The Under Secretary of Defense went on CNBC and literally complained that Claude's "soul" and "policy preferences" were polluting the military supply chain.

You cannot make this stuff up 😂.

The Market Reality Check

From a market perspective, Anthropic just outmaneuvered everyone by doing the exact opposite of what Silicon Valley expects. While competitors are burning billions trying to build consumer hype, Anthropic took their most powerful asset and turned it into the ultimate B2B/Enterprise defense moat.

Just look at what is happening over at OpenAI. They are rushing GPT-6 (codenamed "Spud") for an April release and outright shutting down their viral video generator, Sora.

Why?

Because Sora was burning $5 billion a year for minimal return, and Sam Altman is facing intense internal pressure from his CFO regarding their cash burn and timeline for an IPO.

OpenAI needs a massive enterprise play to justify their $600 billion server commitments. Meanwhile, over at Meta, employees are reportedly competing in an internal leaderboard called "Claudeonomics" to burn as many tokens as possible, with top users burning millions of dollars a month just to gain "Token Legend" status.

What does this hold for the future?

The era of the "fun chatbot" is over. We have crossed the threshold into highly autonomous, agentic systems that require military-grade security infrastructure.

Anthropic’s Project Glasswing proves that the future of AI isn't just about parameter counts; it's about who the world trusts to hold the keys to the kingdom.

And right now, by refusing to ship Mythos to the public, Anthropic just made themselves the most trusted, and perhaps the most feared company in Silicon Valley.